Dimitri Drobatschewsky was the most erudite man I ever knew. He spoke, wrote and read in German, French, and English. Born in Berlin and raised mostly in Luxembourg, he spoke French and German as a native, down to idiom, argot and accent. He was also conversant in Spanish, Italian and Polish (at least, he said, he knew several dirty jokes in Polish).

He was born in 1923, fled the Nazis with his family, joined the French Foreign Legion, and deserted to fight with the Free French forces in Italy in World War II. Later, he became the classical music critic with The Arizona Republic, where he and I became friends.

(Once, when confronted by a musician who had gotten a bad review, he was challenged on his credentials. “What do you think is the most important qualification for a classical music critic?” the musician demanded. “Well,” Dimitri said. “He must have a long and unpronounceable name.”)

Because he was still a boy when moving to Luxembourg, he was able to learn French as a native. “I had to,” he told me. “French girls wouldn’t date you if you didn’t speak perfect French.” And that’s why, after the war, when he emigrated to the U.S., he kept his accent. “American girls loved a foreign accent.”

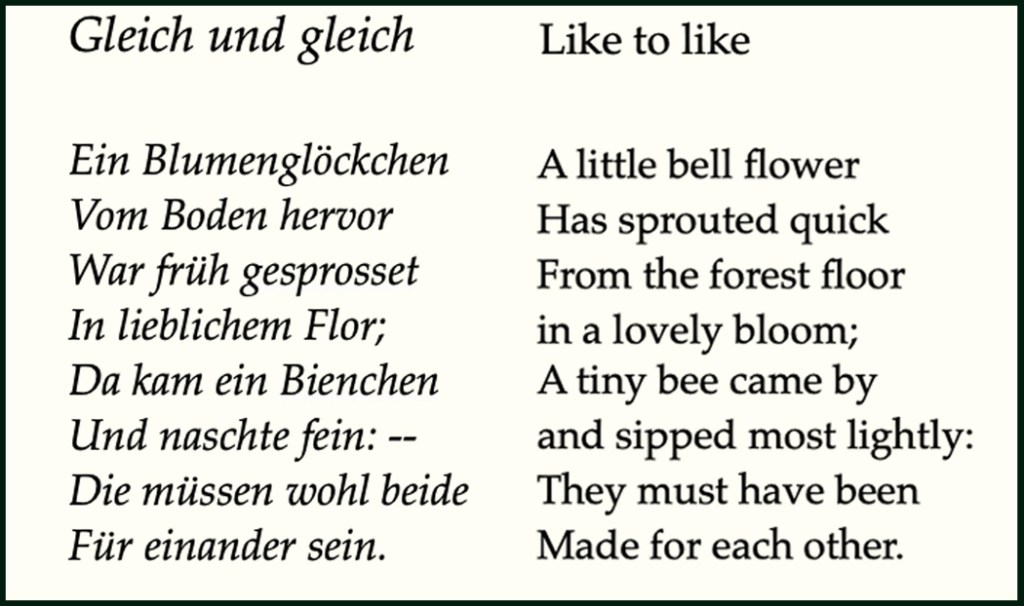

Dimitri felt that French was the most beautiful language, by the sounds it makes in your mouth. But for Dimitri, the best poetry was in German, and further, the greatest poet was Johann Wolfgang von Goethe. For this, I had to take his word.

Because I don’t read German, and when I approach Goethe in translation, he sounds earthbound, even banal.

I try to hear the German in my mind to catch its melody, but I am walled out by my English. All I can gain from the reading is a commonplace.

“Little rose, little rose, little red rose

Little rose of the heath.”

It sounds better when set to Schubert’s music, but still, in English, the words are a touch sappy, and the sentiment pedestrian.

“You have to read him in German,” Dimitri said. “The sound of words, the language is unbelievably beautiful.”

“Röslein, Röslein, Röslein rot,

Röslein auf der Heiden.”

So, I’m afraid Goethe is closed to me. I’ve read Faust several times in several translations, and it never seems to quite get airborne, yet everyone who knows the original feels it is one of the greatest works of literature ever, and that Goethe is the equal of Shakespeare.

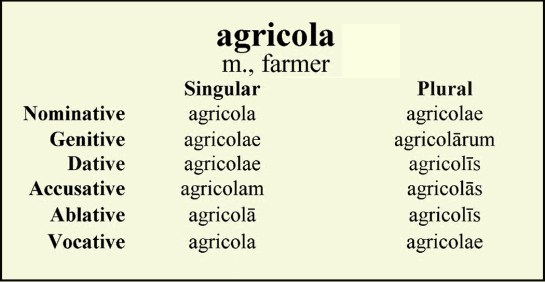

I have the same problem with Quintus Horatius Flaccus, or Horace, in English. In English, his poetry is flat as yesterday’s ginger ale. “You have to read him in Latin,” says my friend Alexander, whose degree is in Classical languages. “In Latin, he is truly exceptional — lapidary perfection.”

Again, I have to take his word for it. Shakespeare may have had “small Latin and less Greek,” but my Latin is even smaller than the Bard’s. I studied it in eighth grade, and mostly what I recall is “agricola.”

I freely confess it is my loss. But there it is; I am stuck with it.

There are those who hold that all literature is untranslatable, that you have to read it in the original language, and while I concede that you can never get all of a poem in a translation, nevertheless, I feel there is a class of work that functions perfectly well shapeshifted.

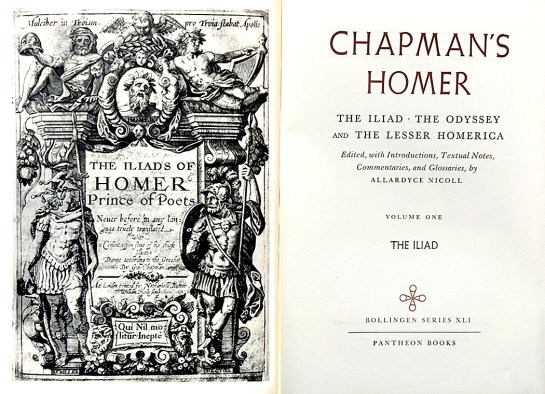

I can read my Homer not only in English, but in multiple translations, from Chapman to Pope to Fitzgerald to Fagles and I am sucked in by the poetry every time. It may very well be better in Greek, but it’s the best thing I’ve ever read even in English. I reread the Iliad once a year, and try to find a new translation each time. (I read the Odyssey, too, and I especially love the translation by T.E. Lawrence — Lawrence of Arabia. Who knew?)

The same thing happens with Dostoevsky. I’m sure it’s better in Russian, but even a good translation moves swiftly and powerfully and I am rapt by the story and moved by the humanity. There is a swift current underneath the surface of language.

It can make a difference which translation you read. I am told by those who know that the Scott Moncrieff translation of Remembrance of Things Past is closest to the quality of Proust’s French, yet I find his English stuffy and outdated. The newer translations, by a range of translators for Viking (Swann’s Way is translated by Lydia Davis), are easier to digest and flow with the quickness that ensures pleasure in the reading. But am I getting the pith of Proust? My French is better than my German, but it is still small beer.

Constance Garnett gave us English versions of what must be every Russian novel ever written. She was a factory. And her versions are still the most widely read. But the more recent versions by Richard Pevear and Larissa Volokhonsky are much easier going. The duo now seem to be challenging Garnett also for the sheer number of volumes converted.

Tolstoy’s War and Peace, in the English of Louise and Aylmer Maude, is the most profound and moving piece of literature I have ever read, despite the profusion of names. How much better would it read if I could understand it in Russian (and French, let’s not forget)? Its power transcends its tongue.

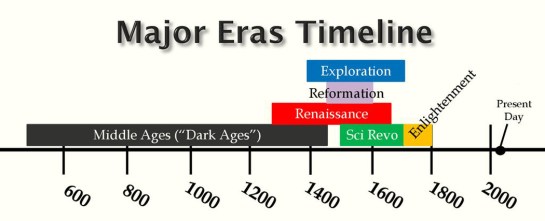

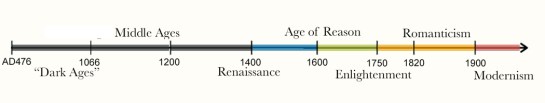

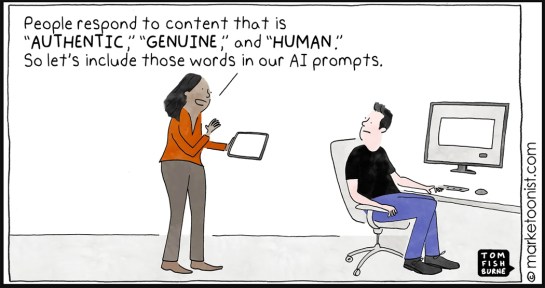

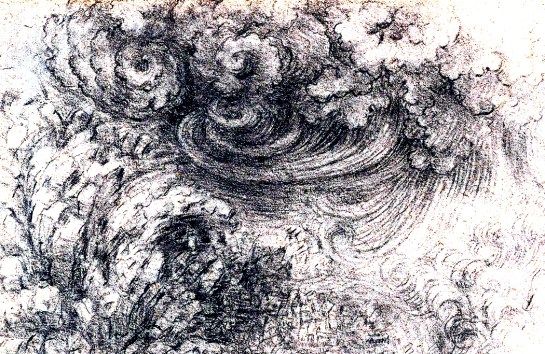

This all raises the question, however, of why Homer or Tolstoy can be read in translation and Horace cannot. And the reason, I believe, is that greatness in writing comes on two essential levels: content and style. That is, how deeply it connects with our human-ness, on one hand, and on the other, how deeply it connects with its medium. This is not an either-or situation; there should always be awareness of both sides. But in practice, one side or the other tends to predominate. The more it is the universal connection with life and experience that we read, the easier the literature can travel. The more it is the words themselves, the more insular the audience.

It would be difficult to illustrate this dichotomy if we try to look at examples of foreign literature translated to English; we would need to be conversant with the original language to see how it morphs in the conversion. But consider attempting to translate several English authors into some other language.

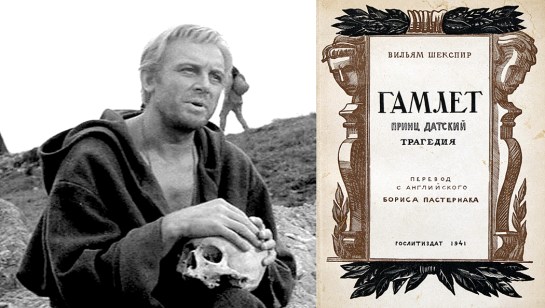

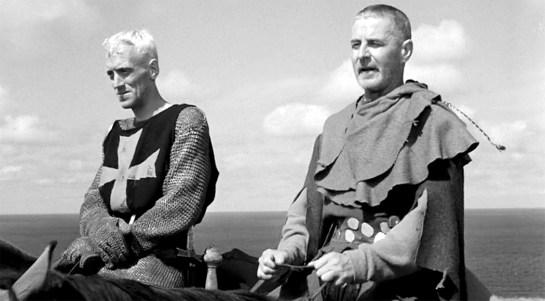

Shakespeare tends to travel well. His plays are valued in many lands and many languages. There are famous examples of Macbeth in Swahili, of Hamlet in Russian, and dozens of operatic versions in Italian, French and German. They all pack a wallop. And Shakespeare is loved in all those languages by their native speakers.

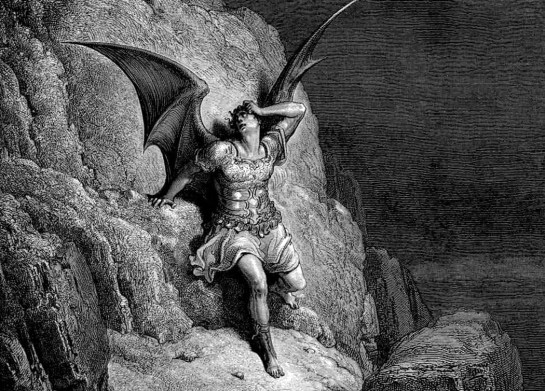

On the other hand, how in hell can you translate John Milton into French? You can tell the story of Paradise Lost, sure, but how can you convey the special organ-tone quality of his language?

“Round he throws his baleful eyes.”

Translate it into French and it comes out as the equivalent of: “He looks around malevolently.” Not the same thing; all the poetry is gone out of it. Deflated; a flat tire.

Or: “When I consider how my light is spent.”

It is only in English that the word “spent” has the two meanings: a spent taper; or money (or life) spent. The word in the opening of his sonnet “On His Blindness” has a nimbus of ambiguity about it. The primary meaning is that he is now blind, but he spreads the halo out from the word “spent” by following it up with several other financial words: “that one Talent which is death to hide” where a talent is also a biblical monetary denomination, and brings to mind the New Testament story of the servants and the talents, and the poor servant who is “cast into the outer darkness, where there will be much weeping and gnashing of teeth.” And then there is, “present my true account,” and its hint of double-entry bookkeeping. It is this expansiveness in language that is the key to Milton’s greatness. He is large; he contains multitudes. But they are bound in English, anodized, as it were, not separable. How do you work that magic in French? Or German? Or Japanese?

These things are untranslatable, and hence, Milton can never have the global currency of Shakespeare.

Or consider translating Chaucer from his own time to ours. The poetry (the sound of the words, phrases, sentences and stanzas) cannot hypnotize us as the original does. Yes, we get the sense, but we miss the art.

“And smale fowles maken melodye, that slepen all the night with open ye.”

Or imagine James Joyce in German. The melody is gone. “Stattlich rundlich Buck Mulligan…”

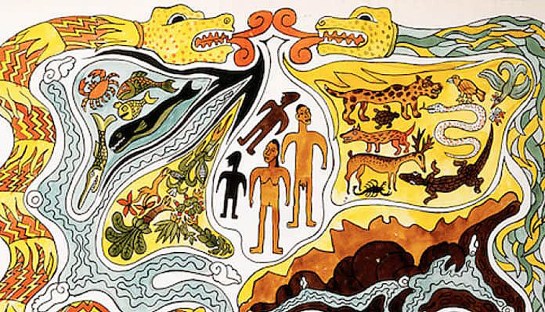

If I turn to a poet I love very deeply, and whose language I can parse, I find his work survives translation very well. Pablo Neruda’s Spanish is so transparent that the ideas embodied in it are clearly seen in any lingo. It is that Neruda’s primary concern in his poetry is not language, but experience. There are real pears and plums in his poetry, real life and death, real love, real sex, real toes and real stones. The poetry is about the things of this world, and not the way we express them.

The poetry is highly wrought, and in Spanish, there is a linguistic layer Neruda also cares about, but the power of the poems comes from Neruda’s connection with his own life, his own experience, and that is possible to share in any language.

“Quiero conocer este mundo,” “I want to know this world,” he says in his Bestiario/“Bestiary.”

“The spider is an engineer,/ a divine watchmaker./ For one fly more or less/ the foolish can detest them:/ I wish to speak with spiders./ I want them to weave me a star.”

Language is a mask. Behind it there is a world. You can concentrate on the language, or on the world. It is easy to be lulled into forgetting the difference, to think that words describe the world, and that the best language is the most accurate lens on the things of this world (este mundo). But they are not the same; they are, rather, parallel universes, and what works in words does not necessarily explain how the world functions. In reality, there are no nouns, no participles. There is only “is.” Can you squeeze that “is” through words? We try. And we try again.